Fangqiang Ding

Postdoctoral Associate @ Massachusetts Institute of Technology

about me

I am a Postdoctoral Associate at MIT, working with Dr. Hermano Igo Krebs, director of The 77 Lab. Before MIT, I worked with Dr. Or Litany at Technion as a Postdoctoral Fellow. I was honored to be selected as a 2025 RSS Pioneer for my work on robust spatial perception for mobile robotics. I received my Ph.D. in Robotics and Autonomous Systems from the School of Informatics, The University of Edinburgh, supervised by Dr. Chris Xiaoxuan Lu, and my B.Eng. in Mechanical Engineering from Tongji University.

🎯 My research agenda centers on Physical AI, which integrates advanced artificial intelligence with physical systems (e.g., self-driving cars, robots, wearables, industrial and IoT devices) to enable them to perceive, reason, and interact with the physical world. My long-term vision is a human-machine symbiotic ecosystem where human and embodied intelligence coexist, collaborate and co-evolve.

🚀 Achieving this vision requires systems that are not only increasingly capable, but also trustworthy and scalable in the real world. Trustworthy systems operate safely, reliably, and robustly across diverse environments and tasks, while protecting human privacy. Scalable systems can be developed and deployed at scale cost-effectively through affordable sensing, computation, and data pipelines. My research aims to address the real-world challenges that hinder trustworthy and scalable Physical AI.

💡 My current research directions include (but are not limited to):

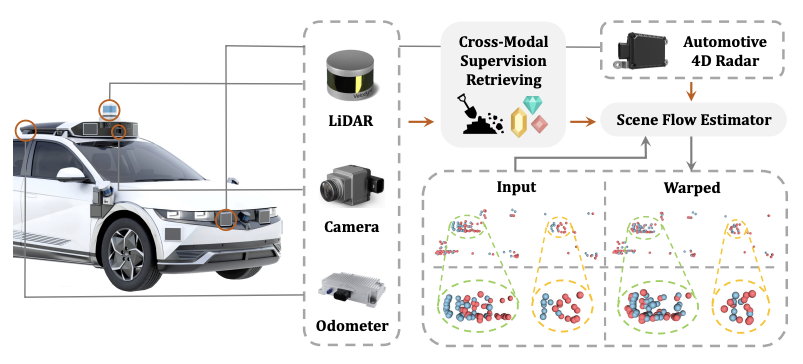

- Multisensory intelligence for reliable mobile autonomy in the wild

- Generalized and non-intrusive human motion and interaction sensing

- Long-horizon robotic (loco-)manipulation across tasks and environments

- Generative scene and data synthesis for robot learning

🤝 If you are interested in these directions and would like to explore collaboration opportunities, please feel free to reach out via email. Email · Resume · Google Scholar

news

| Feb 21, 2026 | 🎉 Two papers (M4Human, PALM) accepted to CVPR-2026. See you in Denver, CO. |
|---|---|
| Oct 01, 2025 | 📖 Started working as a Postdoctoral Associate at MIT. Looking forward to more collaborations. |
| May 13, 2025 | 🎓 Successfully passed my PhD thesis viva. Many thanks to the committee and collaborators. Finally became Dr. Ding! |
| Apr 21, 2025 | 🤖 Selected as a 2025 RSS Pioneer (competitive early-career recognition from the robotics community). See you in Los Angeles, USA. |
| Feb 24, 2025 | 🎉 One paper accepted to ACM SenSys’25. See you in Irvine, USA. |
| Jan 27, 2025 | 📖 Accepted an invitation to serve as Associate Editor for IROS-2025. Looking forward to contributing. |